Not so long ago, a scientist might say she could never have too much data. Even today, in a world drowning in data, it is better to be data-rich than data-poor.

But data is not knowledge.

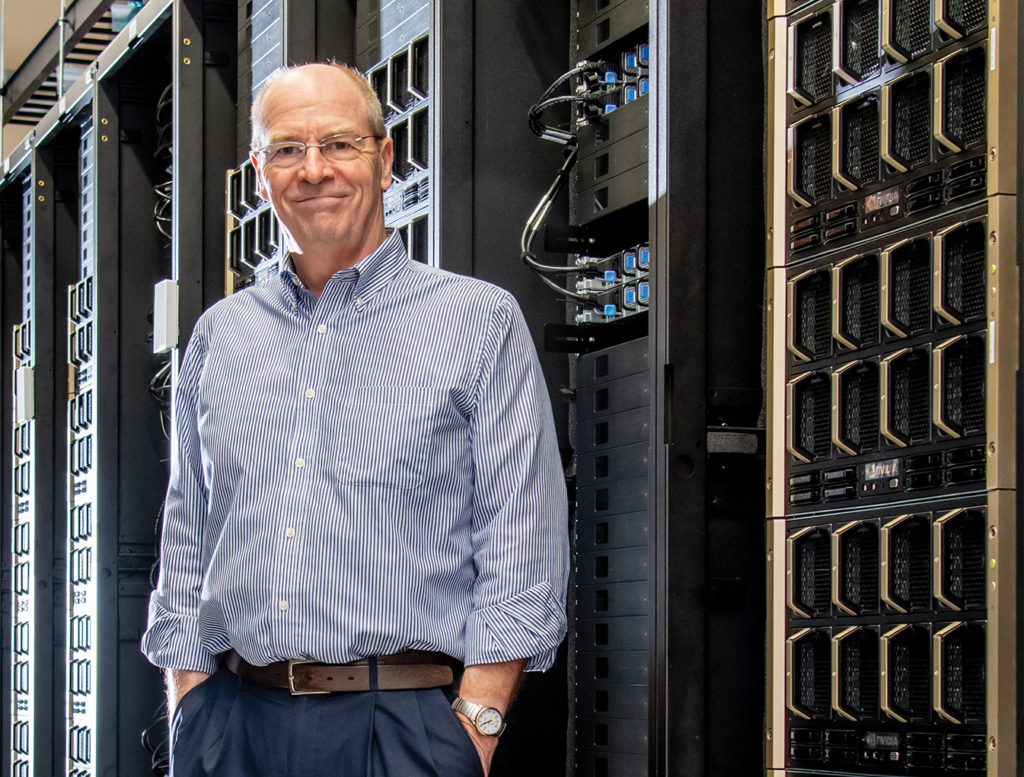

Although data has been called the new oil, a precious resource, finding the relevant in the midst of the irrelevant is a task too big for mere mortals. It takes supercomputing, says University of Florida research computing Director Erik Deumens, to turn data into knowledge.

“That’s where artificial intelligence comes in,” Deumens says. “We’ve been in the age of big data for 20 years now, and the problem is an overwhelming amount of data. How do we sort it, find the correlations, figure out what it all means? Sometimes the data is so complicated and diverse, the human brain just cannot grasp it.”

For AI, however, data is a feast, and supercomputing makes it possible.

UF was already in the forefront of supercomputing with three generations of its high-performance HiPerGator computer. But a gift in 2020 from UF alumnus Chris Malachowsky and NVIDIA, the company he helped found, provided a massive computing boost, and the combination of HiPerGator with NVIDIA DGX SuperPOD™ architecture created one of the most powerful supercomputers in all of higher education.

With supercomputing, science — and knowledge generation — can go faster, Deumens says. Science is full of trial and error and a lot of waiting. Scientists put an experiment in motion, wait to see if it works, then try again if it fails. Deumens uses an example from chemistry, one of the departments with which he is affiliated, where chemists often do calculations that take several days.

“Then at the end, they get one number, the energy of their chemical reaction, and they’re very excited about that one number,” Deumens says.

With supercomputing, however, instead of doing one calculation that takes several days and produces one number, chemists can ask the computer to do multiple calculations to look for the best number.

“The AI goes away and does all these calculations, then says this is the best number. In the past when people tried it, it wouldn’t work, but now it gives better numbers faster than any human can. AI gives better, more accurate results. The process gets accelerated by applying AI.”

The more data we collect, the more important it is to make sense of it, says Robert Guralnick, the informatics curator at the Florida Museum of Natural History.

“We cannot human-power our way through data to make sense of the globe. Right now, we’re gathering beyond petabytes of data,” Guralnick says. “We need to speed up our ability to extract the knowledge we need from these streams of data. We need to derive knowledge in a timescale where we can make relevant decisions.”

AI Connections

When you ask around campus who scientists are collaborating with on the AI and machine learning front, one name crops up frequently: Alina Zare.

Zare’s Machine Learning and Sensing Lab stays busy developing algorithms to automate analysis of data from a wide range of sensors, including ground-penetrating radar, LiDAR and hyperspectral and thermal cameras. In the lab, two post-docs, 17 Ph.D. students, two master’s students and a cadre of undergraduates work on projects with collaborators from agronomy, psychology, the Florida Museum, horticulture, entomology, ecology and a host of other computer scientists, both on campus and at other institutions.

Collaborations, Zare says, are a joy. In collaborative work, the convergence of different viewpoints uncovers what is really essential about a problem or dataset, and the teamwork advances both her field and the fields of her collaborators.

Zare, a professor of electrical and computer engineering, uses supercomputing in her work on supervised learning for incomplete and uncertain data. For 19 years, Zare has worked on explosive hazard detection with funding from the Army Research Office and the Office of Naval Research, developing algorithms for sensors to detect underwater explosive devices and landmines.

Sensors help humans see more than they would be able to see with their eyes, Zare says, such as root systems and buried landmines.

Former agronomy department Chair Diane Rowland, who was recently appointed dean of the College of Natural Sciences, Forestry, and Agriculture at the University of Maine, says AI was not part of her research on peanuts until she began collaborating with Zare four years ago. The machine learning capabilities she gained are helping in work to detect peanut toxins that may hide underneath the hulls of harvested peanuts and research on observing root growth without damaging plants.

“Without that collaboration and the gift of the NVIDIA computing power, a lot of this work isn’t possible,” Rowland says. “You can gather all the data in the world, but unless you can analyze it in complex ways, you lose some of the potential answers the data could provide.”

Zare says her lab relies heavily on NVIDIA GPU systems and especially the new DGX A100 system.

The openness of datasets also is important, Zare says. The code and methods for all her projects are shared broadly, the better to advance machine learning while advancing other sciences as well.

Deumens says the most telling example of the collaborative spirit came during the first round of AI Catalyst awards in the summer of 2020. The awards, sponsored by UF Research, are seed funding, a leg up to get good ideas off the ground and generate proof of concept that can attract support from national agencies. Usually when seed funding is announced, it might attract 40 applications, says Deumens, who sits on the review committee that distributed the $1 million fund.

“Last year, we had 133 applications for 20 awards,” says Deumens, “but there were 75 additional proposals that were considered worthy. And almost all of them were cross collaborations with multiple departments to try and do something really new and innovative.”

The 75 additional proposals didn’t get the catalyst funding, but they did get allocations of time on HiPerGator for their research.

“That made clear to me that this campus has a lot of really smart people, and they’re ready and willing to work together to solve really interesting problems in all fields,” Deumens says. “From religion to motion detection in medicine to agriculture to space, you name it.”

And the collaborations extend beyond UF to other campuses and industries.

Electrical and computer engineering Associate Professor Damon Woodard, director of AI partnerships, says UF has established a partnership with the Inclusive Engineering Consortium, which includes 19 historically minority-serving institutions. Also in the works is a partnership to connect NVIDIA researchers to UF researchers to work on common research problems.

Industry days are also planned to bring companies to UF, where their researchers can meet UF researchers who share similar interests. And in the fall, a hackathon is scheduled — with access to IBM’s Watson — to use real-world datasets to tackle climate change and environmental problems.

Vice President for Research David Norton says UF is trying hard to make AI available to anyone with a good AI idea.

“At the end of the day, you can have a great machine, great infrastructure, but if you don’t have the talented people, the faculty, you’re not going to realize gains,” Norton says. “People that have not really done AI before are now jumping into it, and we’re making that possible.

“We’re in a position that would have been unimaginable four years ago.”

Data Center

When the UF Data Center opened on the East Campus in 2013, the foundation was laid for the gift from Malachowsky and NVIDIA that would come seven years later.

The Data Center is connected to the main campus via two 100 gigabit per second links in two separate multi-fiber pathways for redundancy. Three generations of HiPerGator have called it home, and it had room to grow.

The investment in the data center, Deumens says, put UF in a unique position to be able to even accept the gift from Malachowsky and NVIDIA.

“I worked for IBM as a consultant for some years, and one of the things that would happen is IBM would donate a machine to a university, the supercomputer of the day, worth millions of dollars, and six months later not a single job had run on it,” Deumens says. “So I worked to train IT people to use the machine.

“A machine that is $60 million, massive computing capacity, a machine people dream of … you can’t just give that and flip a switch.

“When you add 1.6 megawatts of electrical power, do you have the people who can negotiate these contracts?”

UF did, and spent $15 million upgrading the electrical and cooling capacity, Deumens says. On top of that, Deumens says, much of the work took place during COVID. Many people worked miracles with supply chains to get the racks and pipes and engines and get them all in time to accept the NVIDIA system.

The Research Computing staff, too, seasoned by nearly a decade with HiPerGator, knew how to deal with the almost immediate computing demand. Researchers interact through four login nodes, where at any one time, 400 people are active per node. Hundreds of thousands of jobs a day are processed. In just the first months of this year, the number of accounts rose from 3,500 to 4,000.

Other state institutions have been invited to use the computing capacity for research, and SEC institutions and a list of about 100 other universities nationwide will be able to use it for education. And a collaboration between UF scientists and engineers at NVIDIA has already produced GatorTron™, which crunched data from 10 years of medical records for 2 million patients and 50 million patient interactions to come up with a medical research tool — in just seven days.

Deumens says GatorTron™ is a kind of pilot that shows the power of AI.

“It was an interesting scientific problem, and it required a machine this powerful,” Deumens says.

With 100 faculty hires in AI on the table for the near future, the investment in AI and supercomputing is paying dividends.

“Not everybody can take home a gift like this and be successful at it,” Deumens says.

Intelligent Machines

As the future of AI unfolds, Zare says one of the most important responses from a machine might be three little words: I don’t know.

“We as humans are pretty good at saying ‘I don’t know.’ But most AI systems right now are not,” Zare says. “They always give an answer. If you ask, ‘Is this a pine tree or an oak tree?’ and you show it a picture of an elephant, it’s going to say, ‘That’s an oak tree,’ for sure.

“Coming up with systems that can say, ‘I don’t know’ is a big challenge and an important one.”

For example, a soldier walking behind an explosive hazard detection system would be safer if a machine said “I don’t know” when it encounters a potentially explosive object, rather than taking a 50-50 guess. In medicine, where AI is on the rise, uncertainty is an important element in diagnoses. If an AI system encounters symptoms it does not expect, it would be better for it not to guess. Zare says the key is to train the system to set aside the inputs it can’t reliably process, so that humans can intervene.
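One simple way to picture a system that sets aside inputs it cannot reliably process is a classifier with a reject option. The sketch below is only an illustration, not the method used in Zare's lab: the function names, the two-label setup and the 0.9 confidence threshold are all assumptions made for the example. It turns raw class scores into probabilities and answers "I don't know" whenever the top probability falls below the threshold, so a human can step in.

```python
import math


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify_or_abstain(scores, labels, threshold=0.9):
    """Return the top label, or "I don't know" if confidence is too low.

    The threshold is a hypothetical value chosen for illustration.
    """
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < threshold:
        return "I don't know"  # defer to a human reviewer
    return labels[best]


labels = ["pine", "oak"]
print(classify_or_abstain([4.0, 0.5], labels))  # one class clearly dominates
print(classify_or_abstain([0.2, 0.3], labels))  # scores nearly tied, so abstain
```

A plain confidence threshold like this has a known weakness that echoes the elephant example: a model can be confidently wrong about inputs unlike anything it was trained on, which is part of why building systems that reliably abstain remains a research challenge.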

In 50 years, Zare predicts, machines will still need humans to guide them, even as they process more data than ever before. That guidance, she says, can come in many forms. For example, researchers are working on a physics-guided AI in which knowledge about the world can be embedded into the AI system.

“I think we’re really, really far away from having systems that can transfer knowledge from one problem to another, which is something we’re pretty good at as people,” Zare says.

José Principe, the director of the National Science Foundation-funded Center for Big Learning, says achieving human-level intelligence using engineering and computer science methodologies is a realistic challenge that will just take time. He agrees with Zare that despite advances, computer-human interaction should be synergistic.

“We know how to teach computers to use data to make decisions in well-defined domains. We can teach machines to recognize images, speech and sound. But there are many other areas that are beyond the current capabilities of AI,” Principe says.

One example of computers making decisions is self-driving vehicles, which encounter uncertainty and conflicting evidence. In an ideal world, Principe says, autonomous vehicles would be tested in a virtual reality environment where they could crash with no consequences, and learn from mistakes.

“Unfortunately, we don’t have simulators for the real world. At a certain point, researchers decide they have enough confidence in their algorithms to take them into the field. And we are exactly at that point, right? Some things work very well, but others the machine cannot predict. As long as the machine is better than the average driver, society probably gains something.”

Principe started his career applying technology to medicine. Today, he’s done a 180-degree turn, and uses biology to inform technology in his Computational NeuroEngineering Laboratory, where he works on brain-machine interfaces, among other things. He is working on creating mathematical frameworks that represent cognitive processes so that they can be programmed in computers.

“In the past seven years, I’ve learned quite a bit about cognitive science, and I’m fascinated by the way the brain organizes past experience.”

Still, he says, science is a long way from mimicking human intelligence, so fears about AI, today at least, are unfounded.

“We still don’t know everything the brain does,” Principe says. “We have all these fears of things that don’t exist and probably never will.”

From the earliest technologies — the bow and arrow, for instance — Principe says humans have wrestled with sets of societal rules for controlling their use. AI is no different.

“There are things we can do that perhaps we shouldn’t do,” Principe says. “My concern is that the speed of technology innovation is exponentially increasing, but our societal decision making is not.”

Deumens agrees and says students and researchers will benefit from UF’s interdisciplinary approach to AI, which goes beyond feats of engineering.

“UF is trying to educate everybody about all aspects of AI, so that when our students graduate, they are not necessarily computer scientists, but they understand what AI is and how to use it ethically,” Deumens says.

Guralnick points out that AI can build into algorithms the very biases that have been problematic for society for a long time. That makes it important to be thoughtful about the ethics of AI, even as we embrace the technical advances. Computer scientists can’t tackle AI alone.

“We need to keep social good in the forefront of AI. For me, a social good is clean water, a healthy environment and a playing field that allows success to happen. We need holistic problem-solving.

“There is not just one problem to solve in our society, there are hundreds, and they’re all interconnected,” Guralnick says. “Everyone’s sort of solving their piece, but what is so cool about the AI initiative at UF is that there’s enough people all recognizing the interconnectedness. AI as a partnership asks how we can solve many problems in a connected way across our university.

“I’m so proud of the institution for recognizing that this isn’t just about computer science.”

This story was originally published by the University of Florida